Over the years we’ve all seen several promising standards efforts fail to bear timely fruit, consuming huge amounts of valuable volunteer time and energy in the process. I posit that this is a relevant problem for at least some of the industries represented at BBQ, and worthy of careful thought inside those industries.

Therefore, let the Big BBQ Brain think together upon: When to Standardize, vs. When Not To? Each path has its own advantages and disadvantages, which some people understand well and others do not. A BBQ Workgroup Report gathering knowledge on this subject could have practical use as inception-time advice for future efforts, helping them choose the best path for the particular project.

Discussion

Under certain circumstances standards development can be slow and contentious, and therefore frustrating. Participants may burn out, then drop out, making subsequent progress even slower. Sometimes standards efforts fail as a result.

When progress toward something important is perceived as excessively process-heavy, technical people naturally become impatient and seek a faster workaround, such as an open-source project. But this is not always a perfect solution either. After the feel-good launch and coding-party stages, the practical end results from that path don’t always display quite the required level of technical rigor, nor succeed quite as widely, nor attract quite the kinds of companies needed, nor exhibit quite the kind of technical stability over time that a large market may require.

A timely, good-quality standard from a recognized standards development organization, created by the major relevant companies, can by contrast powerfully succeed and prevail in the market for many years, even as individual vendors come and go. And for the right kind of project with the right individual participants, the fluidity of an open-source project is absolutely the best and most productive way to go.

What exactly is it about a given project that makes it likely to fail as a standard, or fail as an open-source project? This topic is all about characterizing the two paths, and characterizing the projects suited to each.

Key Questions

- How can precious volunteer hours be funneled toward more (vs. less) productive outcomes?

- What are the characteristics of successful standards efforts?

- What are the characteristics of unsuccessful standards efforts?

- What characteristics make something other than a standards effort – for example, an open-source project, or establishing a new community – a more effective path for a given project?

- What does taking a standards path achieve that other approaches (open-source, etc.) don’t, or can’t?

- What does taking a non-standards path achieve that a standard doesn’t, or can’t?

- What about IPR models?

- Is there anything standards bodies could be doing differently to help troubled projects succeed?

- Which of standardization’s many inconveniences are simply unavoidable?

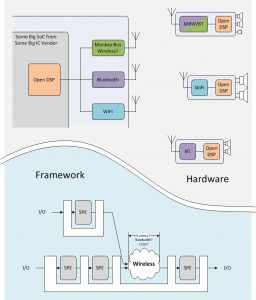

- How about hybrid models, for example combining standardized specifications with open-source implementations?